Can AI assist non-coding humanities researchers in processing non-Western language materials? Yes—but this report shows how and why MLLMs fail on domain-specific OCR, what that reveals about their cognitive boundaries, and methodological implications for low-resource AI-assisted DH workflows.

Models Evaluated: Gemini 3 · GPT-5 · Doubao Content Period: 1940s Chinese newspaper clippings Method: Zero-shot CoT + scoring

1) Spatial Layout Obstacles

navigation

Vertical RTL columns, wrap-around layouts (L-blocks), and non-linear reading paths.

2) Historical Typographyfidelity

Mixed fonts (headers/captions/body), variant characters, and Minguo-era orthography.

3) Semantic Reading Contextcoherence

Discontinuous narratives when columns break—models may lose the thread and start “helping.”

Abstract

The democratization of AI offers students, researchers, scholars, and history enthusiasts with low or no coding background and limited technical or financial resources a potential toolkit to digitize complex historical archives. Without training custom OCR engines—and without fine-tuning, segmentation, or other prior model development—this study evaluates the zero-shot performance of three Multimodal Large Language Models (MLLMs)—Gemini 3, GPT-5, and Doubao—on 1940s Chinese newspapers.

These documents present a “Triple Threat”: Complex Spatial Layouts (vertical, Right-to-Left orientation), Historical Typography (mixed fonts and variant characters), and Semantic Context (discontinuous narrative structures due to non-linear text wrapping). By testing these agents on readable yet structurally complex clippings, we map their “cognitive boundaries.”

Findings reveal countervailing factors: models with high character recognition (Doubao) suffer from Spatial Disorientation, while reasoning-heavy models (GPT-5, Gemini 3) exhibit Generative Hallucination, fabricating historically plausible but textually non-existent narratives when they lose the semantic thread. We propose a practical Triangulated workflow, redefining the digital historian’s role from transcriber to epistemic auditor.

Introduction

From segmentation trap to cognitive gap

When historians confront digitized sources, they perform what cognitive scientists call "situated inference"—integrating visual perception with contextual, historical, and linguistic knowledge to interpret ambiguous marks on paper. A blurry character becomes legible only when understood as 1945 newspaper typography; a semantic oddity becomes meaningful only when contextualized within wartime political discourse.

Today's multimodal large language models (MLLMs) are accessible tools to perform this recognition automatically; however, they operate under a cognitive liability. Lacking domain grounding, historical knowledge, and the ability to acknowledge uncertainty, they generate inferences that mimic human interpretation without the epistemic infrastructure that makes human reading reliable.

This research tests these three MLLMs (Gemini 3, GPT-5, and Doubao) on five clippings from 1945 Chinese newspapers to benchmark not which model "works best," but rather how each system's cognitive architecture produces systematic failure modes when encountering historical documents outside its training distribution. We ask: what can systematic failures teach us about multimodal vision-language processing and archival workflow design to establish a critical methodology for working with these agents?

Methodology

Designing stress test, workflow, and categories for critical analysis

To assess this inference gap of MLLM-assisted OCR, we curated a "Golden Set" of five high-legibility clippings from the 1940s. Given that the images were clear, any failure could be attributed to one of three specific archival challenges—the "Triple Threat":

- Spatial Layout Obstacles: The non-Manhattan geometry of the page. Can the model handle vertical Right-to-Left (RTL) columns that wrap around images (L-blocks) without reading backward or merging columns?

- Historical Typography: The "hybrid" nature of the page, featuring mixed fonts (headers, captions, and body), varying weights, and Traditional variant characters (Minguo orthography).

- Semantic Reading Context: The logical flow of the narrative. When a column breaks, can the model use "Semantic Coherence" to find the continuation, or does it lose the thread and start hallucinating?

We employed a Zero-Shot Chain-of-Thought strategy, instructing models to “visually map” these three elements before transcribing.

(Figure: Part of the working list)

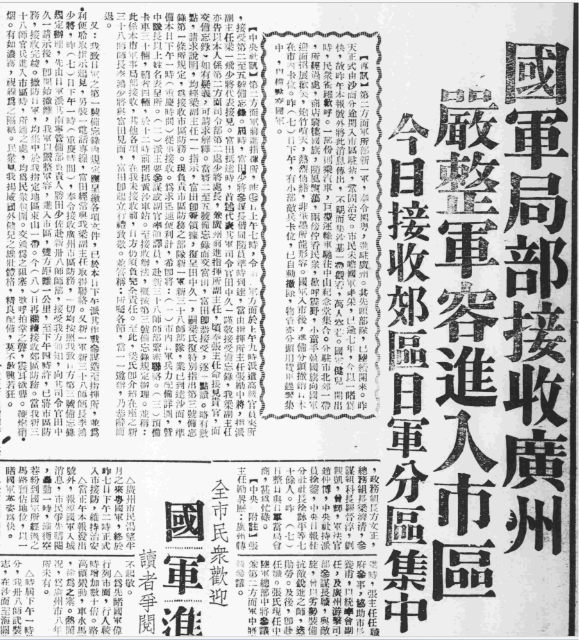

An example of "回" shaped layout with varied typography

(Figure: 1945.09.08_大光報_3_國軍局部接收廣州,嚴整軍容進入市區)

An example of the clipping with mixed "N + L" layouts with varied typography, the "N" shaped part

(Figure: 1945.09.16_大光報_3_將軍從天而降民衆夾道歡迎)

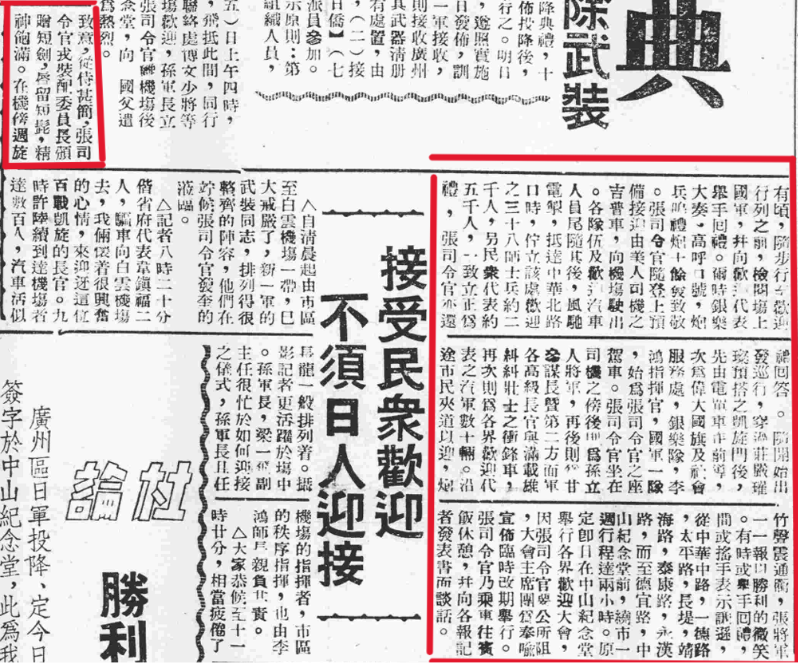

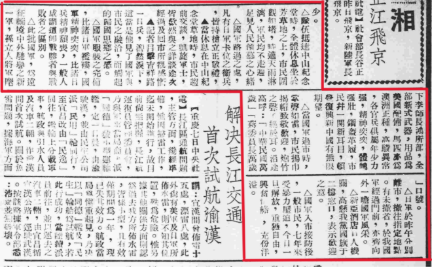

An example of "二" shaped layout with varied typography

(Figure: 1945.09.16_大光報_3_張司令官發表談話首需恢復社會秩序)

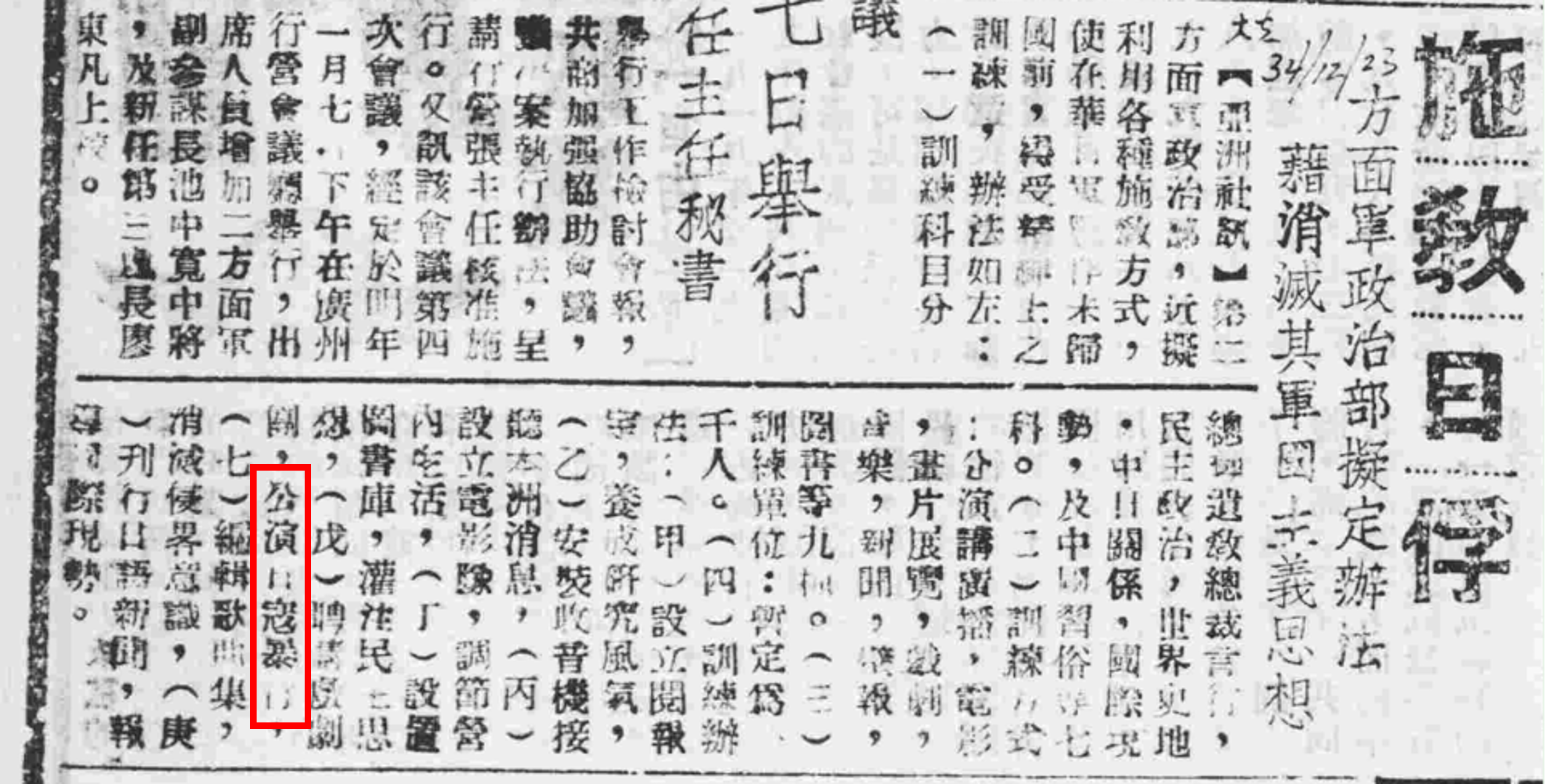

An example of "Z" shaped layout

(Figure: 1945.09.08_大光報_3_國軍進_2)

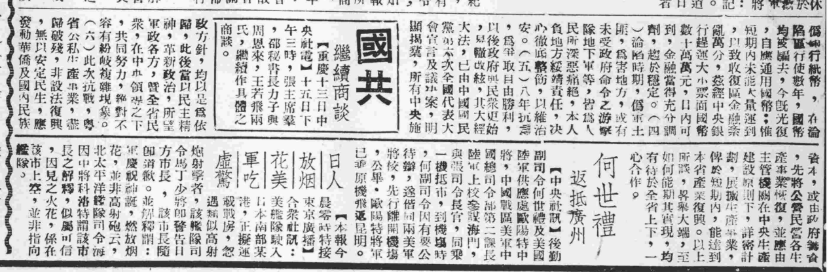

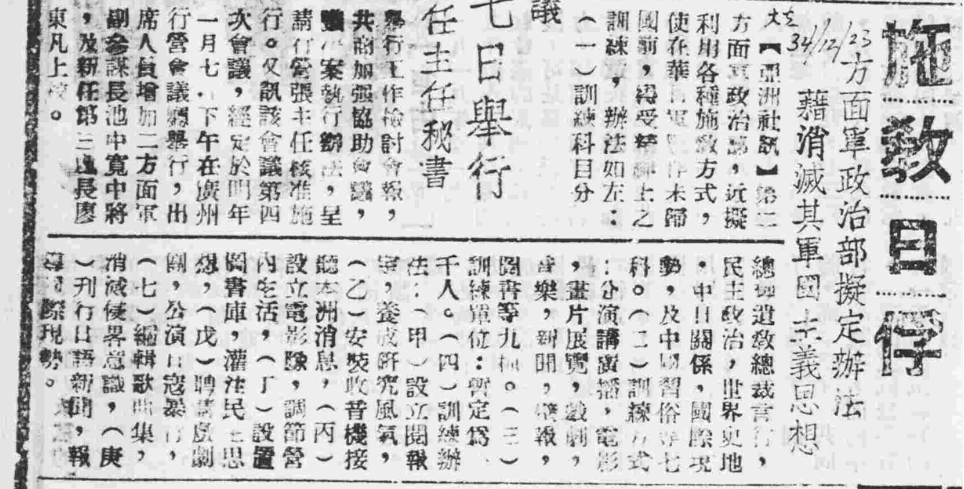

An example of "L" shaped layout

(Figure: 1945.12.23_大光報_5_施教日俘)

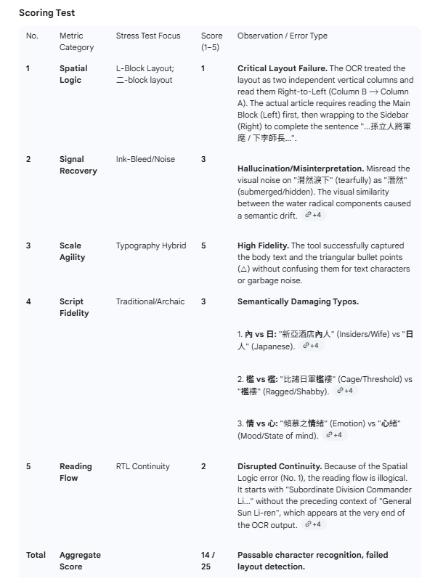

(Figure: The Scoring Test Sheet done by Gemini testing on Gemini's OCR Results)

Phenomenological Analysis

Architectural archetypes of VLM failures

Despite high expectations, models struggle. Serial testing suggests high character error rates (CER) are rarely due to poor vision alone. Instead, they stem from distinct cognitive failure modes scholars must learn to identify.

1) Spatial Vertigo and “Neural Looping” Doubao effect

Trigger: Spatial layout obstacles (Vertical RTL + wrap-around)

Failure:

Inversion error: A fundamental challenge for VLMs trained primarily on modern web data is the "Left-to-Right" (LTR) bias. In our tests, Doubao exhibited a catastrophic failure in spatial grounding. When processing complex "Wrap-Around" layouts, it frequently read the columns in reverse order (Left-to-Right), effectively inverting the narrative structure of the news report.

Recursive loop: Doubao entered a "Neural Loop," repeating the same character (e.g. 务, 机, 备, automatically simplified) dozens of times in nonsensical combinations. This indicates a failure in the model's visual attention mechanism: unable to resolve the noisy pixels, the model got "stuck," unable to advance its context window.

Simplified contamination: It aggressively converted Traditional characters to Simplified (e.g., 张 for 張, 机 for 機, 须 for 髭), violating the historical integrity of the 1945 document.)

Implication: This highlights that high character recognition capability is useless without Spatial Grounding. The model successfully "read" every character (Typography) but failed to "navigate" the page (Layout), rendering the transcript historically useless.

2) The “Creative Writer” Trap GPT-5 effect

Trigger: Semantic reading context (discontinuous narrative)

Failure:

Topic substitution: The most dangerous failure mode observed was "Topic Substitution." This occurs when the model's generative objective (predicting the next likely word) overrides its extractive objective (reading the pixels). When the narrative flow was interrupted by a complex layout, GPT-5 did not stop; it "predicted" the next likely text based on the headline.

Headline-driven fabrication: In the "Prisoner Re-education" test, GPT-5 read the headline but lost the semantic thread in the dense body text. Instead of struggling through the layout, it hallucinated a generic 10-point policy list containing modernized terms that never appeared in the original document.

Visual bypass: In another instance, GPT-5 ignored our specific cropping instructions (Red Boxes) and transcribed a completely different, easier-to-read column from the center of the page.

Implication: GPT-5 acts as a "Probabilistic Historian." When the Semantic Context is broken by a layout obstacle, it fills the gap with statistically probable (but historically false) content. It prioritizes fluency over fidelity.

3) The “Sanitization” Filter Gemini 3 effect

Trigger: Historical typography (variant characters + terminology)

Failure:

Rewriting violence: While Gemini 3 demonstrated superior Spatial Logic—correctly navigating columns—it struggled with the cultural layer of the text. The phrase 公演日寇暴行 ("Perform plays about Japanese Bandit Atrocities") was hallucinated as 公演日常意劇 ("Perform Daily Meaningful Plays"). The visual confusion between the archaic 寇 (Bandit) and 常 (Daily) triggers a semantic drift toward "safer," "normal," modern language.

Implication: This error is not merely visual; it is ideological. It suggests that Typographic Fidelity is being compromised by Safety Instruction-Tuning. Gemini likely "reads" the characters correctly at the pixel level but chooses (through learned constraints) to rewrite them at the semantic level. The model is not just reading; it is translating the past into the present, filtering out the "difficult" historical reality to align with modern safety standards.

Conclusion

A practical MLLM-assisted workflow: “Triangulated Agency”

“Accuracy” is not a single metric: a model may pass Typography but fail Spatial grounding, or pass Spatial logic but fail Semantic integrity (hallucination). A lightweight workflow can leverage disparate strengths across agents.

Prompt engineering as “cognitive scaffolding”

To avoid fine-tuning, prompt engineering should constrain model “creativity.” Recommended Safety Check Prompt:

Transcription Only. Do not modernize text. Do not convert to Simplified Chinese.

If a character is blurry, type [UNK] rather than guess.

Pay special attention to historical military terms.From “Scribe” to “Author”

VLM technology has not solved archival digitization; it transformed it. We moved from the “Segmentation Trap” of traditional OCR to the “Inference Gap” of Generative AI. Models like GPT-5 and Gemini 3 are no longer mere “Scribes” but “Authors”—active constructors that may rewrite rather than read when facing the Triple Threat.

For independent digital humanists, this is empowering but cautionary: waive fine-tuning, but replace it with critical AI literacy. The feasible workflow for 2026 is structured partnership—AI for scale, human for ground truth—so the archive is digitized, not reimagined.

Ending Note

Data collection of 1940s Chinese newspaper clippings, OCR tests across models, and manual checking were conducted by Xiaocong Yu (Nov 2025–Jan 2026). The research test was designed by Yifan Wang assisted with Gemini, utilizing Gemini 3 and Perplexity for drafting and review.

This work is part of an ongoing digital humanities project on Canton maps and 1945 historical events, hosted by DS Colab. Team includes Prof David Chang (Co-PI, HUMA), Dr Shirley Zhang (Co-PI, Library), Adrian Lai (Library), Yifan Wang (Library), Winki Yuen (Library), and Xiaocong Yu (Research Assistant, MPhil Student from HUMA).

For any comments or further discussion, please contact Yifan Wang (lbyifan@ust.hk) and Xiaocong Yu (xyucc@connect.ust.hk).